Posts Tagged graphics

Lightspark 0.4.4 released

Posted by Alessandro Pignotti in Lightspark on August 29, 2010

Lightspark 0.4.4 has been released today. Thanks a lot to all the people who made this release possible. Besides the usual batch of bug fixes, several new features have been included:

- Localization support (using gettext)

- ActionScript exception handling support

- More robust network handling

- Stream controls (Play/Pause/Stop)

It should be noted that, although video stream controls are now supported, they will not be usable in most YouTube videos, because mouse event dispatching to the controls is still broken by the missing masking support.

Lightspark now supports localized error messages, but we are missing translations! So I’d like to invite any user (non-developers included) willing to help Lightspark to contribute a translation for his/her native language.

I’d also like to give some insight into what is being worked on for the next release (0.4.5). First of all, the pluginized audio backend is now mature enough to be merged upstream; this is the first step toward support for multiple audio backends. That said, Lightspark will always focus on functionality and not on the number of backends offered. We’ll work to offer a very small number of fully working backends.

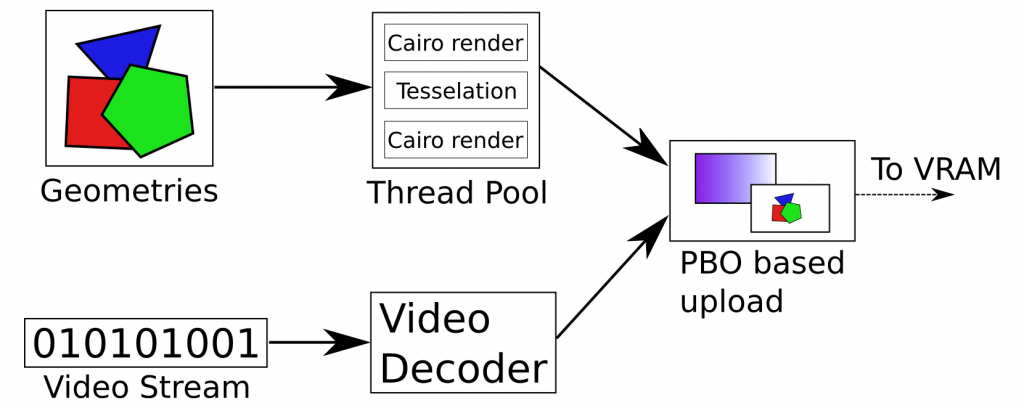

In the meantime we’re also discussing a new, faster and more powerful graphics architecture. My proposal is a mixed software/hardware rendering pipeline, somewhat inspired by modern compositing window managers. Static (defined in the SWF file) and dynamic (generated using ActionScript code) geometries will be rendered in software using cairo, exploiting the thread pool to scale on multi-core architectures. The resulting surfaces and decoded video frames (if any) will be uploaded using Pixel Buffer Objects to offload the work to the video card (this usually involves a DMA transfer). OpenGL will then be used to blit the various rendered components on screen, while applying filters, effects and blending.
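The proposal above can be sketched in a few lines of Python. This is only a toy model under loud assumptions: `render_geometry`, `blend_over` and `composite_frame` are hypothetical names, the "software rendering" just fills a tiny surface with one premultiplied RGBA color instead of calling cairo, and the Pixel Buffer Object upload and the OpenGL blit are replaced by a plain CPU "source over" blend. What it does show is the shape of the pipeline: independent surfaces rendered in parallel on a thread pool, then composited in order.

```python
from concurrent.futures import ThreadPoolExecutor

WIDTH, HEIGHT = 4, 2  # tiny surface size, chosen only for illustration


def render_geometry(color):
    """Stand-in for software rasterization: fill a surface with one
    premultiplied RGBA color (a real renderer would draw shapes with cairo)."""
    return [[color for _ in range(WIDTH)] for _ in range(HEIGHT)]


def blend_over(dst, src):
    """Stand-in for the GPU compositing step: premultiplied 'source over'
    blend, out = src + dst * (1 - src_alpha), applied per pixel."""
    return [
        [
            tuple(s + d * (1.0 - src_px[3]) for s, d in zip(src_px, dst_px))
            for dst_px, src_px in zip(dst_row, src_row)
        ]
        for dst_row, src_row in zip(dst, src)
    ]


def composite_frame(layer_colors):
    """Render every layer on a thread pool, then composite back to front."""
    with ThreadPoolExecutor() as pool:
        # pool.map preserves input order, so layers stay back to front.
        surfaces = list(pool.map(render_geometry, layer_colors))
    frame = render_geometry((0.0, 0.0, 0.0, 0.0))  # transparent background
    for surface in surfaces:
        frame = blend_over(frame, surface)
    return frame
```

For example, compositing an opaque red layer under a half-transparent blue one, `composite_frame([(1.0, 0.0, 0.0, 1.0), (0.0, 0.0, 0.5, 0.5)])`, yields pixels that mix both colors. In the real design the blend would run on the video card, and the rendering step is the part that benefits from extra cores.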

That’s all folks. As always, testing by as many people as possible is critical for the success of the project, so please try out this release and report any crashes, weird issues and anything you don’t like. I’d like to emphasize this: never assume a bug is already known. If you hit a crash, take a look at the Launchpad bug tracker. If your issue is not already reported, please report it!

The quest for graphics performance: part I

Posted by Alessandro Pignotti in Coding tricks on June 27, 2009

While developing and optimizing Lightspark, the modern Flash player, I’m greatly expanding my knowledge and understanding of GPU internals. In the last few days I’ve managed to find a couple of nice tricks that boosted performance and, as nice side effects, saved CPU time and added features.

First of all, I’d like to introduce a bit of the Lightspark graphics architecture.

The project is designed from the ground up to make use of the features offered by modern graphics hardware, namely 3D acceleration and programmable shaders. The Flash file format encodes the geometries to be drawn as a set of edges. This representation is quite different from the one understood by GPUs, so the geometries are first triangulated (reduced to a set of triangles). This operation is done on the CPU and is quite computationally intensive, but the results are cached, so overall it does not hurt performance.
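The "triangulate once, then reuse" idea can be illustrated with a small Python sketch. It is an illustration under stated assumptions, not Lightspark's code: `triangulate_fan` is a hypothetical helper that only handles convex outlines with a simple triangle fan, whereas real Flash shapes can be concave, contain holes and include curves, and need a general triangulator. The cache is just `functools.lru_cache` keyed on the outline.

```python
from functools import lru_cache


@lru_cache(maxsize=None)
def triangulate_fan(outline):
    """Fan-triangulate a convex polygon.

    `outline` is a tuple of (x, y) vertices in order; it must be hashable
    so that lru_cache can use it as the cache key. Returns a tuple of
    triangles, each a tuple of three vertices.
    """
    if len(outline) < 3:
        return ()  # nothing to fill
    anchor = outline[0]
    # Every triangle shares the first vertex: (v0, v1, v2), (v0, v2, v3), ...
    return tuple(
        (anchor, outline[i], outline[i + 1])
        for i in range(1, len(outline) - 1)
    )
```

Calling `triangulate_fan(((0, 0), (1, 0), (1, 1), (0, 1)))` twice computes the two triangles of the square only on the first call. The second call is answered from the cache, which is why an expensive triangulation does not have to be paid on every frame.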

Moreover, Flash offers several different fill styles that can be applied to a geometry, for example solid color and various kinds of gradients. Lightspark handles all those possibilities using a single fragment shader, a little piece of code that is invoked on every pixel to compute the desired color. Of course the shader has to know about the current fill style. This information, along with several other parameters, can be passed using different methods. More on this in the next issue.

There is one peculiar thing about the shader though. Let’s look at some simple pseudocode:

gl_FragColor=solid_color()*selector[0]+linear_gradient()*selector[1]+circular_gradient()*selector[2]...;

Selector is a binary array: the only allowed values are zero and one, and exactly one element is one. This means that the current fragment (pixel) color is computed for every possible fill style and only afterward is the correct result selected. This may look like a waste of computing power, but it is actually more efficient than something like this:

if(selector[0])

gl_FragColor=solid_color();

else if(selector[1])

gl_FragColor=linear_gradient();

...

This counterintuitive fact comes from the nature of the graphics hardware. GPUs are very different from CPUs and are capable of crunching tons of vector operations blindingly fast, but they fall to their knees when they encounter branches in the code. This is actually quite common on deeply pipelined architectures that lack complex branch prediction circuitry: not only GPUs, but also number-crunching devices and multimedia monsters such as the IBM Cell. Keep this in mind when working on such platforms.
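To make the selector trick concrete, here is a small CPU-side model in Python. It is a sketch under assumptions: the three fill functions are made-up placeholders that take a single coordinate `u` in [0, 1], and `shade_branchless` / `shade_branching` are hypothetical names mirroring the two pseudocode variants above. Python cannot show the speed difference, which is a property of the GPU; the sketch only shows that the two formulations select the same color when the selector is one-hot.

```python
def solid_color(u):
    """Placeholder solid fill: always opaque red."""
    return (1.0, 0.0, 0.0, 1.0)


def linear_gradient(u):
    """Placeholder linear gradient: gray ramp along u."""
    return (u, u, u, 1.0)


def circular_gradient(u):
    """Placeholder circular gradient: gray level grows with distance from 0.5."""
    d = abs(u - 0.5) * 2.0
    return (d, d, d, 1.0)


FILL_STYLES = (solid_color, linear_gradient, circular_gradient)


def shade_branchless(u, selector):
    """Evaluate every fill style and weight each result by its selector
    entry (0.0 or 1.0), as in the single-expression pseudocode."""
    color = [0.0, 0.0, 0.0, 0.0]
    for weight, fill in zip(selector, FILL_STYLES):
        value = fill(u)
        for channel in range(4):
            color[channel] += value[channel] * weight
    return tuple(color)


def shade_branching(u, selector):
    """Pick one fill style with explicit branches, as in the if/else pseudocode."""
    if selector[0]:
        return solid_color(u)
    elif selector[1]:
        return linear_gradient(u)
    return circular_gradient(u)
```

With `selector = (0.0, 1.0, 0.0)`, both functions return the linear gradient color for any `u`. The branchless version does more arithmetic, but every fragment executes exactly the same instruction stream, which is the property the hardware rewards.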