Archive for category Insane Projects

RFC: is OpenGL not the Right Thing (TM)?

Posted by Alessandro Pignotti in Insane Projects, Lightspark on October 2, 2010

It’s late at night and I’m back in Pisa for the last year of my master’s degree. And, as often happens, a weird idea struck me. What if OpenGL is not the right thing for Lightspark? No, I’m not talking about dropping hardware-accelerated rendering, as that’s surely the right way to go, but using OpenGL really feels unnatural. In the design of the advanced graphics engine, OpenGL is basically used only to upload images rendered with cairo to VRAM, and to blit and composite all the rendered chunks together... do we really need all the OpenGL complexity to do this?

Well... OpenGL is basically the only thing we have, the only way to talk to the graphics hardware. But here comes the Gallium project! As Gallium splits the API from the driver, it could be possible, in theory, to write a specialized Gallium state tracker that does only the work we need... and maybe does it better.

I’m writing here first because I’m not (yet) experienced enough with the Gallium platform to know if the idea is sane, and second because I somehow feel the same approach could be useful for other apps... for example, Lightspark and compositing window managers have similar needs. So I’d like to have some feedback about writing a small API and a Gallium state tracker to do the following:

- DMA accelerated transfers of rendered image data

- Blitting and compositing of such data on screen

- Notify the application when asynchronous work (such as DMA transfers) has ended (BTW: what’s the right way of doing this in OpenGL?)

- Enqueue to-be-uploaded-to-VRAM images and have them sequentially transferred

- Apply simple but programmable (shader-like) transformations to pixel data

Big disclaimer: I’ve not yet started working on this idea. I’ve not even seriously thought about its feasibility. I’d just like to have some feedback on this.

Lightspark 0.4.0 released

Posted by Alessandro Pignotti in Insane Projects, Lightspark, Uncategorized on May 30, 2010

Just a quick update. I’ve released version 0.4.0 of Lightspark, a free flash player implementation. This release was focused on improving stability, so all the crashes found by the many testers should be fixed now. Thanks a lot for testing: several issues were related to particular graphics hardware and I would never have found them without your collaboration. Please keep testing and reporting any issues.

Now the focus shifts to YouTube support, which was lost after one of the latest updates to YouTube’s infrastructure. And believe me, we’re not far! I’m attaching a screenshot of the current status (in git master) as proof. Full support will be delivered with release 0.5.0.

The quest for graphics performance: part II

Posted by Alessandro Pignotti in Coding tricks, Insane Projects on March 16, 2010

I’d like to talk a bit about the architecture I’m using to efficiently render the video stream in Lightspark. As often happens, the key to high performance computing is using the right tool for each job. First of all, video decoding and rendering are asynchronous and executed by different threads.

Decoding itself is done by the widely known FFmpeg; no special tricks are played here. So the starting condition of the optimized fast path is a decoded frame data structure. The data structure is short-lived and is overwritten by the next decoded frame, so it must be copied to a more stable buffer. The decoding thread maintains a short array of decoded frames ready to be rendered, to account for variance in the decoding delay. The decoded frame is in YUV420 format, which means that the resolution of the color data is one half of the resolution of the luminance channel in each dimension. The data is returned by FFmpeg as 3 distinct buffers, one for each of the YUV channels, so we actually save 3 buffers per frame. This copy is necessary and it’s the only one that will be done on the data.
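To make the copy step concrete, here is a minimal sketch of saving the three planes of a YUV420 frame into a stable buffer. It is not Lightspark's actual code: `StableFrame`, `copy_plane` and `copy_yuv420` are hypothetical names, and the `data`/`linesize` parameters only mirror the layout of the fields with the same names in FFmpeg's `AVFrame`, where each row of a plane may be padded beyond the visible width.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <vector>

// Hypothetical container for one saved frame: three tightly packed planes.
struct StableFrame {
    int width = 0;
    int height = 0;
    std::vector<uint8_t> y, u, v;
};

// Copies one plane row by row, dropping the per-row padding of the source.
static void copy_plane(std::vector<uint8_t>& dst, const uint8_t* src,
                       int linesize, int width, int height)
{
    assert(linesize >= width);
    dst.resize(static_cast<std::size_t>(width) * height);
    for (int row = 0; row < height; ++row) {
        std::memcpy(dst.data() + static_cast<std::size_t>(row) * width,
                    src + static_cast<std::size_t>(row) * linesize,
                    static_cast<std::size_t>(width));
    }
}

// Saves a YUV420 frame: the chroma planes have half the width and half the
// height of the luminance plane (rounded up for odd sizes).
StableFrame copy_yuv420(const uint8_t* const data[3], const int linesize[3],
                        int width, int height)
{
    StableFrame frame;
    frame.width = width;
    frame.height = height;
    const int chroma_w = (width + 1) / 2;
    const int chroma_h = (height + 1) / 2;
    copy_plane(frame.y, data[0], linesize[0], width, height);
    copy_plane(frame.u, data[1], linesize[1], chroma_w, chroma_h);
    copy_plane(frame.v, data[2], linesize[2], chroma_w, chroma_h);
    return frame;
}
```

A decoder thread could keep a few such `StableFrame` objects in a small array and recycle them, which would avoid reallocating the vectors for every frame.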

Rendering is done using a textured quad, and texture data is loaded using OpenGL pixel buffer objects (PBOs). PBOs are memory buffers managed by the GL, and it’s possible to load texture data from them. Unfortunately they must be explicitly mapped into the client address space to be accessed, and unmapped once they have been updated. The advantage is that data transfers between PBOs and video or texture memory are done by the GL using asynchronous DMA transfers. Using 2 PBOs it’s possible to guarantee a continuous stream of data to video memory: while one PBO is being copied to texture memory by DMA, new data is being computed and transferred to the other by the CPU. This usage pattern is called streaming textures.
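The rotation between the two buffers can be modelled without any graphics hardware. The sketch below is only an illustration of the alternation, not real OpenGL code: `StreamingPair` is a hypothetical class in which each slot is a plain memory buffer standing in for a PBO.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hardware-free model of two-buffer streaming. Each slot stands in for a PBO;
// the class and method names are hypothetical.
class StreamingPair {
public:
    explicit StreamingPair(std::size_t bytes)
        : slots_{{std::vector<uint8_t>(bytes), std::vector<uint8_t>(bytes)}}
    {
        assert(bytes > 0);
    }

    // Slot the CPU writes the next frame into (the PBO that would be mapped).
    std::vector<uint8_t>& fill_slot() { return slots_[fill_]; }

    // Slot whose contents would currently be copied to the texture by DMA.
    const std::vector<uint8_t>& upload_slot() const { return slots_[1 - fill_]; }

    // Called once per frame, after the fill slot has been completely written:
    // the freshly written slot becomes the upload source and vice versa.
    void swap() { fill_ = 1 - fill_; }

private:
    std::array<std::vector<uint8_t>, 2> slots_;
    std::size_t fill_ = 0;
};
```

In a real renderer the fill step would roughly correspond to binding a buffer to `GL_PIXEL_UNPACK_BUFFER` and writing between `glMapBuffer` and `glUnmapBuffer`, and the upload step to a `glTexSubImage2D` call that reads from the other bound PBO.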

In this case such data is the next frame, taken from the decoded frames buffer. Texture data for a single OpenGL texture must be provided in packed (interleaved) form, so we must pack a 1-buffer-per-channel frame into a single buffer. This can be done without any intermediate copy using instructions provided by the SSE2 extension. Data is loaded in 128-bit chunks from each of the Y, U and V channels, then packed and padded using register-only operations. Results are written back using non-temporal moves. This means that the processor is free to postpone the actual commitment of data to memory, for example to exploit burst transfers on the bus. If we ever want to be sure that the changes have been committed to memory, we have to issue the sfence instruction. For more information see the Intel reference manuals on movapd, movntpd, sfence and punpcklbw.

The result is a single buffer in YUV0 format; the padding byte has been added to increase texture transfer efficiency, as video cards internally work with 32-bit data anyway. The destination buffer is one of the PBOs so, at the end of the conversion routine, the data will be transferred to video memory using DMA.
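The packing step can be sketched with SSE2 intrinsics. This is an illustration under stated assumptions rather than the routine used in Lightspark: the function names are hypothetical, it handles a single run of 16 pixels, it duplicates each chroma sample horizontally (vertical reuse of chroma rows is left to the caller), and it uses the integer load and store intrinsics instead of the instructions named above. A scalar fallback is included so the code also builds where SSE2 is not available.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#if defined(__SSE2__)
#include <emmintrin.h>  // SSE2 intrinsics
#endif

// Packs 16 pixels: 16 Y bytes plus 8 U and 8 V bytes (each chroma sample is
// shared by two horizontally adjacent pixels) into 64 bytes laid out as
// Y U V 0 per pixel. `dst` must be 16-byte aligned.
void pack_yuv0_16px(const uint8_t* y, const uint8_t* u, const uint8_t* v,
                    uint8_t* dst)
{
    assert(reinterpret_cast<std::uintptr_t>(dst) % 16 == 0);
#if defined(__SSE2__)
    const __m128i zero = _mm_setzero_si128();
    const __m128i yy = _mm_loadu_si128(reinterpret_cast<const __m128i*>(y));
    __m128i uu = _mm_loadl_epi64(reinterpret_cast<const __m128i*>(u));
    __m128i vv = _mm_loadl_epi64(reinterpret_cast<const __m128i*>(v));
    uu = _mm_unpacklo_epi8(uu, uu);  // U0 U0 U1 U1 ... one U per pixel
    vv = _mm_unpacklo_epi8(vv, vv);  // V0 V0 V1 V1 ... one V per pixel
    const __m128i yu_lo = _mm_unpacklo_epi8(yy, uu);    // Y U pairs, pixels 0-7
    const __m128i yu_hi = _mm_unpackhi_epi8(yy, uu);    // Y U pairs, pixels 8-15
    const __m128i v0_lo = _mm_unpacklo_epi8(vv, zero);  // V 0 pairs, pixels 0-7
    const __m128i v0_hi = _mm_unpackhi_epi8(vv, zero);  // V 0 pairs, pixels 8-15
    __m128i* out = reinterpret_cast<__m128i*>(dst);
    // Non-temporal stores: the data bypasses the cache on its way to memory.
    _mm_stream_si128(out + 0, _mm_unpacklo_epi16(yu_lo, v0_lo));  // pixels 0-3
    _mm_stream_si128(out + 1, _mm_unpackhi_epi16(yu_lo, v0_lo));  // pixels 4-7
    _mm_stream_si128(out + 2, _mm_unpacklo_epi16(yu_hi, v0_hi));  // pixels 8-11
    _mm_stream_si128(out + 3, _mm_unpackhi_epi16(yu_hi, v0_hi));  // pixels 12-15
#else
    for (std::size_t p = 0; p < 16; ++p) {
        dst[4 * p + 0] = y[p];
        dst[4 * p + 1] = u[p / 2];
        dst[4 * p + 2] = v[p / 2];
        dst[4 * p + 3] = 0;
    }
#endif
}

// Call once after the last store of a frame, before the buffer is handed over.
void finish_packing()
{
#if defined(__SSE2__)
    _mm_sfence();  // make the non-temporal stores globally visible
#endif
}
```

For a full frame the function would be called for every 16-pixel run of every row, with the same U and V row used for two consecutive Y rows.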

Using the streaming texture technique and SSE2 data packing we managed to efficiently move the frame data to texture memory, but it’s still in YUV format. Conversion to the RGB color space is basically a linear algebra operation, so it’s ideal to offload this computation to a pixel shader.
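To show why the conversion is a linear operation, here is a CPU-side sketch of the arithmetic a pixel shader would perform per pixel. The post does not say which conversion matrix is used; the coefficients below are the common full-range BT.601 (JPEG-style) ones and are an assumption, as are the function names.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <vector>

// Rounds to the nearest integer and clamps to the 0..255 range of a byte.
static uint8_t clamp_to_byte(float x)
{
    return static_cast<uint8_t>(std::lround(std::min(255.0f, std::max(0.0f, x))));
}

// Converts packed YUV0 pixels (4 bytes each) to packed RGB (3 bytes each).
// Assumes full-range BT.601 coefficients; other standards use other values.
std::vector<uint8_t> yuv0_to_rgb(const std::vector<uint8_t>& yuv0)
{
    assert(yuv0.size() % 4 == 0);
    std::vector<uint8_t> rgb;
    rgb.reserve(yuv0.size() / 4 * 3);
    for (std::size_t i = 0; i < yuv0.size(); i += 4) {
        const float y = yuv0[i];
        const float u = yuv0[i + 1] - 128.0f;  // chroma is centered on 128
        const float v = yuv0[i + 2] - 128.0f;
        rgb.push_back(clamp_to_byte(y + 1.402f * v));
        rgb.push_back(clamp_to_byte(y - 0.344136f * u - 0.714136f * v));
        rgb.push_back(clamp_to_byte(y + 1.772f * u));
    }
    return rgb;
}
```

Each output channel is a fixed weighted sum of the three inputs plus an offset, so in a shader the loop body collapses to one matrix-vector product per fragment.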

Lightspark gets video streaming

Posted by Alessandro Pignotti in Insane Projects on March 15, 2010

Just a brief news item. It’s been a long way, and today I’m very proud to announce video streaming support for Lightspark, the efficient open source flash player. Moreover, performance looks very promising. I’m not going to publish any results right now, as I’d like to do some testing first. Anyway, Lightspark seems to be outperforming Adobe’s player by a good margin, at least on Linux.

In the next post I’ll talk a bit about some of the performance tricks that made it possible to reach such a result.

Lightspark’s news

Posted by Alessandro Pignotti in Insane Projects on February 23, 2010

Lightspark’s progress has never been so good. The latest achievement was to correctly load, execute and partially render the YouTube player. As you may have seen, YouTube has recently switched to Flash 10 and ActionScript 3.0 to serve some HD content, while keeping the old AS2-based player for lower quality videos. The old player is supported by Gnash but, until now, there were no open source alternatives to play newer, high definition content. As Lightspark’s AS3 engine matures, that gap is almost closed. Stay tuned, as I’m planning to release a new technology demo very soon.

UPDATE: Demo tarball released on sourceforge

Extreme FLEXibility

Posted by Alessandro Pignotti in Insane Projects on January 26, 2010

Although there has been no official news about Lightspark for several months, I’ve been doing a great deal of work under the hood. As my bachelor thesis, I’ve mostly completed and thoroughly tested my LLVM-based ActionScript 3.0 JIT engine and, during the last few days, I’ve been working on polishing the virtual machine a bit. I’m proud to announce that, in a few days, a new technical demo of Lightspark will be released, but this time we’re not talking about basic examples. Lightspark is now mature enough to run a simple application based on Flex.

Flex is a rich open source framework written in ActionScript and developed mainly by Adobe. Even if right now the test application features only a progress bar and a square, there is a lot of stuff being done by the framework under the hood.

If the framework works, it means that the engine is now stable enough to move from pre-alpha to alpha status. The design is also now satisfactory enough for me to allow other people to join the project and work on subsystems without knowing the internal details of everything. As an added bonus, preliminary support for the Windows platform will be included in the release.

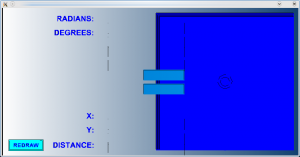

The screenshot above is the result of running my test application. For the curious, the flash file is generated using the mxmlc compiler from the following source file:

    <?xml version="1.0" encoding="utf-8"?>
    <mx:Application
        xmlns:mx="http://www.adobe.com/2006/mxml"
        horizontalAlign="center" verticalAlign="middle">
        <mx:VBox x="0" y="0" width="201" height="200" backgroundColor="0x0080C0" alpha="0.8"/>
    </mx:Application>

Lightspark second technical demo announcement

Posted by Alessandro Pignotti in Insane Projects on June 13, 2009

I’m currently finishing some last cleanups and enhancements before releasing a second technical demo of the Lightspark Project. A lot of time has passed since the first demo, and the project is growing healthily. This release aims at rendering the following movie, selected from Adobe’s demos. The results may not be very impressive, but many things are going on under the hood.

The most interesting features in this release are:

- GLSL based rendering of fill styles (eg. gradients)

- LLVM based ActionScript execution. Code is compiled just in time

- A few tricks are also played to decrease the stack traffic typical of stack machines.

- First, although simple, framerate timing

- Framework to handle ActionScript asynchronous events. Currently only the enterFrame event works, as the input subsystem is not yet in place. But stay tuned, as I have some nice plans for that.

The code will be released in a couple more days, or at least I hope so.

ActionScript meets LLVM: part II

Posted by Alessandro Pignotti in Insane Projects on June 10, 2009

Just a quick update. The nice tricks I’m playing to build a fast ActionScript VM using LLVM are now the topic of my bachelor thesis, the completion of which will still need a handful of months. If you are interested in the development you may follow the git changelog here or contact me privately.

ActionScript meets LLVM: part I

Posted by Alessandro Pignotti in Insane Projects on May 17, 2009

One of the major challenges in the design of Lightspark is the ActionScript execution engine. Most of the more recent flash content is almost completely built on ActionScript technology, which with version 3.0 has matured enough to become a foundational block of the current, and probably future, web. The same technology is also going to become widespread offline if the Adobe AIR platform succeeds as a cross-platform application framework.

But what is ActionScript? Basically it is an almost ECMAScript-compliant language; the specification covers the language itself, a huge library of components and the bytecode format that is used to deliver code to the clients, usually as part of a SWF (flash) file.

The bytecode models a stack machine, as most of the arguments are passed on the stack and not as operands in the code. This operational description, although quite dense, requires a lot of stack traffic, even for simple computations. It should be noted that modern x86/amd64 processors employ specific stack tracing units to optimize out such traffic, but this is highly architecture-dependent and not guaranteed.

LLVM (which stands for Low-Level Virtual Machine) is, on the other hand, based on an intermediate language in SSA form. This means that each symbol can be assigned only once. This form is extremely useful when optimizing code. LLVM offers a nice interface to a bunch of features, most notably sophisticated code optimization and just-in-time compilation to native assembly.

The challenge is: how to exploit LLVM’s power to build a fast ActionScript engine?

The answer is, as usual, a matter of compromises. Quite a lot of common usage patterns of the stack machine can be heavily optimized with limited work; for example, most of the data pushed on the stack is going to be used right away! More details on this in the next post...
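One way to picture the "used right away" observation is a translator that keeps the operand stack at compile time and emits single-assignment temporaries instead of real pushes and pops. The sketch below is only an illustration of that general idea, not the engine's actual method: the three opcodes are hypothetical (they are not the real ActionScript bytecode set) and the textual output merely resembles SSA code, it is not valid LLVM IR.

```cpp
#include <cassert>
#include <string>
#include <vector>

// Hypothetical mini bytecode, for illustration only.
enum class Op { Push, Add, Mul };

struct Insn {
    Op op;
    int imm;  // used only by Push
};

// Translates stack bytecode into single-assignment statements. The operand
// stack exists only at translation time and holds value names, so a value
// that is pushed and consumed right away never touches a runtime stack.
std::vector<std::string> to_ssa(const std::vector<Insn>& code)
{
    std::vector<std::string> stack;  // names of the values currently on the stack
    std::vector<std::string> out;    // emitted statements
    int next_temp = 0;
    for (const Insn& insn : code) {
        if (insn.op == Op::Push) {
            stack.push_back(std::to_string(insn.imm));
            continue;
        }
        assert(stack.size() >= 2);  // the bytecode is assumed to be well formed
        const std::string rhs = stack.back();
        stack.pop_back();
        const std::string lhs = stack.back();
        stack.pop_back();
        const std::string name = "%t" + std::to_string(next_temp++);
        const std::string opname = (insn.op == Op::Add) ? "add " : "mul ";
        out.push_back(name + " = " + opname + lhs + ", " + rhs);
        stack.push_back(name);  // the result is assigned exactly once
    }
    return out;
}
```

For the sequence push 2, push 3, add, push 4, mul, the translator emits two statements, and each temporary is defined exactly once, which is the property SSA form requires.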

The Lightspark Project, a modern flash player implementation

Posted by Alessandro Pignotti in Insane Projects on March 28, 2009

When Adobe released the complete SWF file format specification some months ago, I thought that it would be nice to develop a well-designed open source flash player. I’ve now been working on this idea for some time and I’ve recently released the code on SourceForge.

The project objectives are quite ambitious, as the flash specification is really complex. The project is designed to take advantage of the features present on modern hardware, so it is not supposed to run on older machines. All the graphics rendering is done using OpenGL, and in the future programmable shaders will be used to offload even more calculations to the GPU. Extensive multithreading is employed to make use of multicore and hyper-threading processors. I’ll write a more detailed post about some tricky and interesting parts of the project soon.